In the rapidly evolving landscape of digital content creation, Alibaba’s speech-to-video model lets users produce realistic animated digital humans from just a portrait and an audio clip. The tool serves a wide range of content creators, who can use it to generate lifelike AI avatars that speak, sing, or perform in varied settings. As part of Alibaba’s Wan2.2 video generation series, this open-source model emphasizes accessibility for developers looking to elevate their projects. With features such as multi-character scene management and precise speech synchronization, its potential applications range from engaging social media content to more elaborate film productions. The system also reduces computational demands while keeping video output visually consistent.

Alibaba’s latest innovation in video generation technology, particularly its open-source framework for transforming audio into dynamic visual performances, marks a significant step forward in content creation. Known as the speech-to-video model, this capability enables creators to easily animate digital representations from static images using sound input, ultimately resulting in versatile AI-generated avatars. The development of these animated figures supports various formats, from short snappy clips to longer cinematic productions, allowing for creative experimentation and storytelling. This emerging technology is particularly beneficial for designers and filmmakers, as it integrates seamlessly with existing open-source video tools, expanding the creative possibilities for animated storytelling. With such advancements, the future of virtual performances and digital characters appears more vibrant than ever.

Introduction to Alibaba’s Speech-to-Video Model

Alibaba has recently launched an innovative open-source speech-to-video model, Wan2.2-S2V, designed to revolutionize content creation by enabling the generation of animated digital humans from a single portrait. This groundbreaking tool allows users to create lifelike AI avatars that can effectively speak, sing, or perform, thereby catering to a diverse range of applications from social media visuals to cinematic projects. As a part of the Wan2.2 video generation series, this model is a significant leap forward in the way creators can utilize technology to produce compelling video content.

The introduction of the Wan2.2-S2V model aligns with the growing demand for immersive and interactive media experiences. Content creators and researchers alike are eager to explore the possibilities offered by this state-of-the-art video generation technology, which integrates Alibaba’s advanced speech synthesis capabilities. With the capability to animate portraits from varying angles and generate videos in both 480P and 720P resolutions, the platform provides a user-friendly environment that appeals to both amateur and professional creators.

The Technical Innovations Behind Wan2.2-S2V

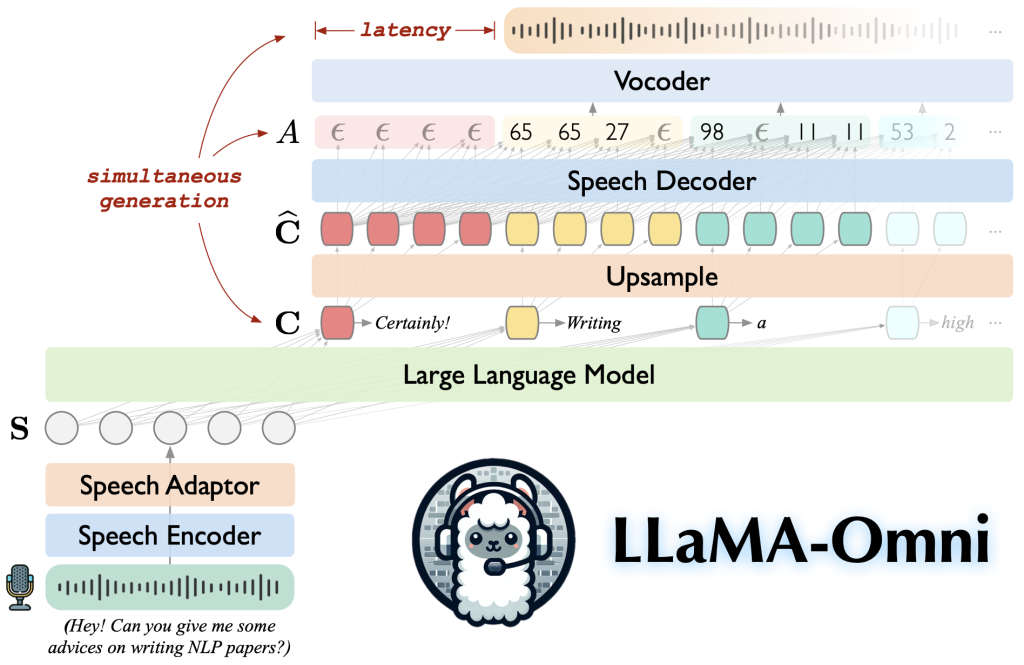

At the core of Alibaba’s Wan2.2-S2V model is audio-based animation technology that precisely synchronizes animated movements with spoken words. This feature is crucial for creating believable interactions in animated digital humans. The model is engineered to manage complex multi-character scenes which respond to specific gestures and interactions, offering dynamic storytelling opportunities that were previously difficult to achieve. This level of sophistication in video generation technology not only enhances the quality of the animations but also broadens the creative horizons for developers.
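
To make the synchronization idea concrete, here is a minimal sketch of the bookkeeping any audio-driven animation pipeline needs: deciding which slice of audio plays while each video frame is on screen. It is not taken from the Wan2.2-S2V code; the function name, the `FrameWindow` type, and the sample rate and frame rate used below are all illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FrameWindow:
    """Audio samples that play while one video frame is on screen."""
    frame_index: int
    start_sample: int  # inclusive
    end_sample: int    # exclusive


def align_audio_to_frames(num_samples: int, sample_rate: int, fps: int) -> list[FrameWindow]:
    """Split an audio clip into one sample window per video frame.

    sample_rate and fps are illustrative inputs, not values taken from
    Wan2.2-S2V. Integer arithmetic keeps window boundaries free of drift.
    """
    if num_samples < 0 or sample_rate <= 0 or fps <= 0:
        raise ValueError("num_samples must be >= 0; sample_rate and fps must be > 0")
    # Ceiling division so trailing audio still gets a (shorter) final frame.
    num_frames = (num_samples * fps + sample_rate - 1) // sample_rate
    windows = []
    for i in range(num_frames):
        start = i * sample_rate // fps
        end = min((i + 1) * sample_rate // fps, num_samples)
        windows.append(FrameWindow(i, start, end))
    return windows


# Hypothetical example: 2 seconds of 16 kHz audio rendered at 16 frames per second.
windows = align_audio_to_frames(num_samples=32_000, sample_rate=16_000, fps=16)
print(len(windows), windows[0])
```

A model would then condition each generated frame on features extracted from its window. Computing the boundaries from the frame index, rather than adding a rounded step repeatedly, is what keeps lips and audio from drifting apart over a long clip.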

Moreover, the system is trained on a custom audio-visual dataset focused on film and television scenarios, which improves the realism of its animated outputs. Multi-resolution training lets the model produce various video formats, from vertical short-form clips suited to platforms like TikTok to traditional widescreen formats. A frame compression method that condenses extensive video histories into a single latent representation further reduces computational demands while maintaining visual consistency. This addresses a common weakness of video generation tools: instability over long sequences.
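
The article does not say how the frame compression works internally, so the following is only a toy illustration of the general idea: collapsing a history of any length into one fixed-size vector, so that the cost of conditioning on the past stops growing with clip length. The recency-weighted average, the `compress_history` name, and the `decay` parameter are all assumptions chosen for simplicity, not the model's actual method.

```python
def compress_history(frame_latents: list[list[float]], decay: float = 0.5) -> list[float]:
    """Collapse a variable-length history of per-frame latent vectors into
    one fixed-size vector via a recency-weighted average.

    Hypothetical stand-in: the article does not describe how Wan2.2-S2V
    actually compresses its frame history.
    """
    if not frame_latents:
        raise ValueError("need at least one frame latent")
    if not 0.0 < decay <= 1.0:
        raise ValueError("decay must be in (0, 1]")
    dim = len(frame_latents[0])
    if any(len(v) != dim for v in frame_latents):
        raise ValueError("all frame latents must have the same dimension")

    n = len(frame_latents)
    # Newest frame gets weight 1, the one before it `decay`, then decay**2, ...
    weights = [decay ** (n - 1 - i) for i in range(n)]
    total = sum(weights)
    return [
        sum(w * v[d] for w, v in zip(weights, frame_latents)) / total
        for d in range(dim)
    ]


# The output size depends only on the latent dimension, not on history length.
short_history = [[0.0, 0.0], [3.0, 6.0]]
long_history = [[1.0, 2.0]] * 500
print(len(compress_history(short_history)), len(compress_history(long_history)))
```

Whatever the real mechanism is, the property shown here is the one the text describes: the next chunk of video is generated against a single summary of everything before it, rather than against every earlier frame.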

Accessibility of Open-Source Video Tools

The decision by Alibaba to release Wan2.2-S2V as an open-source project marks a pivotal moment for the development of accessible video generation technology. By allowing developers to access and modify the model on platforms such as Hugging Face, GitHub, and Alibaba’s own ModelScope, a vast community of content creators can leverage this technology for various innovative uses. This open-source approach empowers users across different expertise levels to harness the potential of AI avatars, fostering a collaborative environment for advancements in animated digital content creation.

With over 6.9 million downloads of the Wan models, it is clear that the demand for such tools is on the rise. The accessibility of these powerful open-source video tools not only democratizes the animation process but also nurtures a vibrant ecosystem of creative experimentation. Independent creators, as well as larger production teams, can now explore the full capabilities of animated video without the need for significant investment in high-end computing resources—ultimately leading to a more diversified range of animated media.

Applications of Wan2.2-S2V in Content Creation

The versatility of the Wan2.2-S2V model opens up a myriad of applications for content creators. From crafting engaging social media clips to developing complex narratives for film projects, these animated digital humans can captivate audiences with their lifelike expressions and realistic movements. With capabilities to integrate speech synthesis seamlessly, creators can design characters that resonate with viewers on a personal level, enhancing storytelling in modern media formats.

Additionally, this technology provides endless opportunities for businesses to engage with their customers through personalized video messaging or interactive marketing campaigns. As companies look to differentiate themselves in a crowded market, employing AI avatars to represent their brand can provide a unique edge. The flexibility of the model, combined with its high-quality output, makes it an essential tool for anyone looking to make a significant impact in the digital space.

Challenges in Animated Video Generation Technology

Despite the remarkable advancements in animated video generation technology, challenges remain that call for further research. One is the synchronization of dialogue with visual movement, which is crucial for maintaining audience immersion. While the Wan2.2-S2V model excels at speech synchronization, there are still nuances of human expression and timing that the technology cannot yet fully replicate.

Another significant hurdle involves keeping animated sequences consistent over extended periods. Many current systems struggle to stabilize animations throughout lengthy clips, often leading to inconsistencies that detract from the viewer’s experience. Alibaba’s frame compression method, which reduces computational load while keeping output consistent, is a step toward solving this problem. Continued work in this area should bring seamless long-form animation closer.

Future Implications of AI Avatars

The future implications of using AI avatars, such as those produced by the Wan2.2-S2V model, extend far beyond traditional media; they hint at a transformative shift in how we interact with digital content. As technology continues to advance, we can anticipate a world where virtual characters will play integral roles in online interactions, from providing personalized customer service to engaging users in immersive virtual environments. This evolution presents a unique opportunity for businesses and creators to establish deeper connections with their audiences.

Moreover, the broadening scope of AI avatars raises important questions about ethics and responsibility in content creation. As these digital humans become more lifelike, ethical considerations regarding their usage in storytelling and representation will be paramount. Ensuring that these technologies are used responsibly to avoid misinformation and stereotypes will be an ongoing challenge for creators and platforms alike. The path forward will require collaboration across various sectors to ensure that realistic animations enrich our media landscape positively.

The Role of Community in Advancing Video Generation Technology

Community involvement plays a crucial role in shaping the evolution of video generation technologies like Wan2.2-S2V. The collaborative efforts of developers, researchers, and content creators contribute significantly to refining the model’s capabilities. By sharing insights, feedback, and innovative applications, the community can drive continual improvements that enhance the overall performance and user experience of the technology.

Furthermore, as users experiment with the open-source model, the results can lead to new features and adaptations that were not initially anticipated. This grassroots approach to development can spark creativity and experimentation that propels the industry forward. By driving collective growth and innovation, the community not only enhances the capabilities of AI avatars but also cultivates a culture of collaboration that can lead to groundbreaking discoveries in animated digital content.

Comparing Wan2.2-S2V with Competitors

When assessing Alibaba’s Wan2.2-S2V model, it’s essential to consider its position relative to competing technologies in the video generation landscape. Many existing models offer similar functionalities, yet few can match the seamless integration of speech synthesis and customizable animation features that characterize Wan2.2-S2V. By focusing on user accessibility and diverse production capabilities, Alibaba sets a notable standard in the market that may influence industry expectations.

Additionally, unlike several proprietary models that restrict access and adaptability, the open-source nature of Wan2.2-S2V encourages widespread experimentation and innovation. This strategic choice not only positions Alibaba favorably among developers but also fosters competition and drives continuous advancement in the sector. As new players enter the field, the challenge will be to match the high standards set by this model and expand upon its foundational technology.

Exploring the Impact of Open-Source Models on Innovation

The open-source approach Alibaba has taken with Wan2.2-S2V has a significant impact on innovation in video generation technology. By allowing developers to freely access and modify the software, Alibaba fosters an environment where new ideas can surface and spread. This contrasts sharply with traditional proprietary systems, which often stifle creativity through access limitations and restrictive usage agreements.

This innovative approach encourages a more collaborative spirit among developers, as they can build upon one another’s work and share solutions to common challenges faced in video production. The collective knowledge and experience gained through community contributions not only speed up the development process but also enhance the quality of animated outputs. As this trend grows, it will likely inspire more companies to adopt open-source strategies, leading to a wave of new innovations in digital content creation.

Frequently Asked Questions

What is the purpose of Alibaba’s speech-to-video model Wan2.2-S2V?

The Wan2.2-S2V speech-to-video model developed by Alibaba aims to create animated digital humans from a single portrait and an audio clip. It’s designed for content creators and researchers who want to generate lifelike AI avatars that can speak, sing, or perform.

How does the audio-based animation technology in the speech-to-video model work?

The audio-based animation technology in Alibaba’s Wan2.2-S2V model precisely synchronizes speech with movement, allowing for the animation of portraits from various angles. This includes adaptations for specific gestures and multi-character scenes, making it a powerful tool in video generation technology.

What types of projects can benefit from using the Wan2.2-S2V speech-to-video model?

The Wan2.2-S2V speech-to-video model is suitable for a wide range of projects, including social media content and longer film-style projects. Its capability to manage intricate animations makes it ideal for both independent creators and professional teams.

Is Alibaba’s Wan2.2-S2V model open-source?

Yes, Alibaba’s Wan2.2-S2V model is open-source, allowing developers to access and utilize the system to animate portraits. This openness promotes collaboration and enhances the possibilities within the field of animated digital humans.

What platforms can the new speech-to-video model be downloaded from?

The Wan2.2-S2V speech-to-video model can be downloaded from several platforms, including Hugging Face, GitHub, and Alibaba’s ModelScope, making it accessible for various users interested in AI video generation.

What are the output resolutions available with the speech-to-video model?

The Wan2.2-S2V speech-to-video model offers output resolutions of 480P and 720P, ensuring quality results without needing high-end computing power, ideal for many content creators and projects.
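
As a rough illustration of how the two resolution tiers combine with vertical and widescreen formats, the sketch below computes frame dimensions from a tier and an aspect ratio. It assumes that "480P" and "720P" refer to the shorter side of the frame and that dimensions should be multiples of 16; both are assumptions made for this example, not documented Wan2.2-S2V requirements, and `target_size` is a hypothetical helper.

```python
# Assumption: "480P"/"720P" name the shorter side of the frame in pixels.
SHORT_SIDE = {"480P": 480, "720P": 720}


def target_size(tier: str, aspect: tuple[int, int], multiple: int = 16) -> tuple[int, int]:
    """Return (width, height) for a resolution tier and a width:height aspect ratio.

    The longer side is rounded to the nearest `multiple`, a common constraint
    in video models; the value 16 is an assumption, not a documented
    Wan2.2-S2V requirement.
    """
    if tier not in SHORT_SIDE:
        raise ValueError(f"unknown tier {tier!r}; expected one of {sorted(SHORT_SIDE)}")
    aw, ah = aspect
    if aw <= 0 or ah <= 0:
        raise ValueError("aspect ratio terms must be positive")
    short = SHORT_SIDE[tier]
    long_exact = short * max(aw, ah) / min(aw, ah)
    long_side = max(multiple, round(long_exact / multiple) * multiple)
    # Landscape and square put the long side on the width; portrait on the height.
    return (long_side, short) if aw >= ah else (short, long_side)


print(target_size("720P", (16, 9)))  # widescreen -> (1280, 720)
print(target_size("720P", (9, 16)))  # vertical short-form -> (720, 1280)
print(target_size("480P", (16, 9)))  # -> (848, 480)
```

The same tier therefore covers both a vertical clip and a widescreen one; only the orientation of the long side changes.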

What kind of training data was used for the speech-to-video model?

For the Wan2.2-S2V speech-to-video model, researchers compiled a custom audio-visual dataset focused on film and television scenarios, enabling more realistic animations and helping the model understand complex interactions.

How does the model handle longer video sequences?

Wan2.2-S2V utilizes a frame compression method that condenses lengthy video histories into a single latent representation. This approach reduces computational demands while stabilizing longer sequences, which is a common challenge in video generation technology.

What advancements does Wan2.2-S2V offer compared to previous models?

Compared to earlier models in the Wan series, Wan2.2-S2V introduces advanced audio-based animation technology, improved multi-resolution training, and the ability to handle intricate scenes with specific gestures, enhancing its effectiveness for creating animated digital humans.

How many downloads have the Wan models achieved?

The Wan models, including the previous versions, have surpassed 6.9 million downloads across platforms like Hugging Face and ModelScope, indicating strong interest and usability in the community.

| Key Point | Details |
|---|---|
| Introduction of Wan2.2-S2V | Alibaba has launched a new open-source speech-to-video model that creates animated digital humans from a single portrait and audio clip. |
| Target Audience | Designed for content creators and researchers looking to create lifelike avatars that can talk, sing, or perform. |
| Animation Technology | Uses audio-based animation technology that synchronizes speech with movement effectively. |
| Multi-character Management | Can manage intricate scenes involving multiple characters and respond to gestures and environmental cues. |
| Output Video Quality | Offers resolutions of 480P and 720P, generating quality outputs without high-end computing requirements. |
| Training Dataset | Built on a custom audio-visual dataset focused on film and television scenarios. |
| Video Formats | Supports both vertical short-form and traditional widescreen outputs through multi-resolution training. |
| Compression Method | Utilizes frame compression to condense long video histories into a single latent representation. |
| Previous Releases | Follows earlier releases in the Wan series (Wan2.1 and Wan2.2), with over 6.9 million downloads. |
| Availability | Available for download on platforms like Hugging Face, GitHub, and Alibaba’s ModelScope. |

Summary

The speech-to-video model from Alibaba, Wan2.2-S2V, represents a significant advance in the creation of animated digital humans. This innovative tool allows creators to transform still portraits and audio into lifelike animations, offering a convenient solution for diverse video content creation. With its open-source nature and robust features—like audio-synchronized movements and multi-character scene management—this model is sure to attract the attention of both independent creators and larger teams looking for efficient video production methods. The comprehensive training it has undergone ensures high-quality output suitable for various formats, making it a game-changer in the world of video generation.